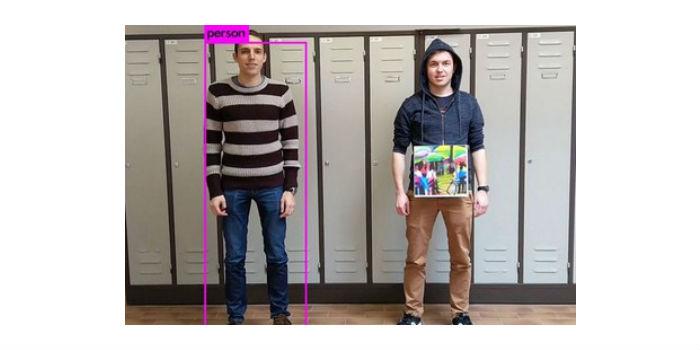

A cardboard sign with a colorful print: that is all researchers at KU Leuven's Faculty of Engineering Technology needed to fool a smart camera. To be clear, Wiebe Van Ranst, Simen Thys and Toon Goedemé, from the EAVISE research group, have no bad intentions. Their research aims to expose the weaknesses of intelligent detection systems.

"Intelligent detection systems are based on pattern recognition," says Professor Goedemé, head of EAVISE (Embedded and Artificially Intelligent Vision Engineering) at the De Nayer campus. "They consist of a camera and software that interprets the images automatically. If you train these systems on images of many different people for a while, they learn to recognize people and distinguish them from objects. Although we differ in height, hair color or facial features, the algorithm identifies all of us as human beings."

"This makes intelligent detection systems very suitable for security purposes. They automatically raise an alert as soon as the cameras detect an intruder, even when that person tries to hide. In the past, you needed security guards for that, staring at screens for hours on end: a tedious job that is becoming a thing of the past."

Millions of parameters

However, intelligent detection systems are not foolproof. Sometimes they have difficulty recognizing certain patterns. Researchers around the world are trying to find the Achilles' heel of these systems, and small changes are often enough. Fake glasses made of cardboard, for example, can confuse a facial recognition system.

Professor Goedemé and his team have taken things a step further. Master's student Simen Thys and postdoc Wiebe Van Ranst managed to fool YOLO, one of the most popular algorithms for detecting objects and people.

The researchers held a 40-by-40 cm cardboard poster with a colorful print in front of their bodies. That was enough to fool YOLO: carrying the sign makes you invisible to the system.

That is highly remarkable, according to Professor Goedemé: "In previous tests, people wore a T-shirt with the image of a bird. The algorithm did not recognize a person, but detected a bird. Our pattern, which was designed using artificial intelligence, makes people invisible. If you carry the sign, the system does not detect you at all, neither as a human being nor as an object. It's remarkable, and we don't know exactly why this particular pattern can fool YOLO. After all, algorithms based on neural networks use millions of parameters. For researchers, they are still a black box."

Arms race

The researchers are excited, but they are quick to warn that other security flaws remain.

"Where do we go from here? That's easy: we found a weakness, and now it needs to be repaired. In this case, we could teach YOLO's algorithm that people holding a sign with this particular pattern are also human beings. That's not hard to do. However, it's safe to assume that YOLO has other weaknesses as well. Will we ever be able to fix all the security flaws? I don't think so. As I mentioned, such an algorithm is a black box. This is the beginning of an arms race."

"We didn't expect this much hype. But we understand why the idea appeals to the imagination. The notion that you can make yourself invisible to security cameras using nothing but a colorful sign is intriguing."

Source: KU Leuven.