Researchers from HSE University and Nizhny Novgorod State University of Linguistics (LUNN) have developed a new AI-based method for collecting voice biometric data that automatically checks the quality of voice recordings.

The method relies on a noise-robust algorithm that works at signal-to-noise ratios of 10 dB or higher, can run in real time, and could have significant implications for speech recognition.

The researchers' findings are presented in a new paper titled "A Method for Measuring the Pitch Frequency of Voice Signals for Acoustic Speech Analysis Systems," published in Measurement Techniques. According to the announcement, the low quality of voice reference templates, usually caused by ambient noise, is a limiting factor for the widespread adoption of voice identification systems.

The method proposed by Professor Andrey Savchenko of HSE University and Professor Vladimir Savchenko of LUNN can reduce the error rate of voice identification systems to 2 percent at a signal-to-noise ratio of 10 dB or more, according to the researchers.
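For context on the 10 dB figure: signal-to-noise ratio in decibels is 10 * log10 of the ratio of signal power to noise power, so 10 dB means the speech carries roughly ten times the power of the background noise. The short Python sketch below is illustrative only and does not come from the paper; the helper names `snr_db` and `add_noise_at_snr` are hypothetical. It computes SNR and mixes noise into a clean signal at a chosen SNR, one common way to test a system at a given noise level.

```python
import numpy as np

def snr_db(signal: np.ndarray, noise: np.ndarray) -> float:
    """Hypothetical helper: SNR in dB, i.e. 10 * log10(P_signal / P_noise)."""
    p_signal = np.mean(np.asarray(signal, dtype=float) ** 2)
    p_noise = np.mean(np.asarray(noise, dtype=float) ** 2)
    return float(10.0 * np.log10(p_signal / p_noise))

def add_noise_at_snr(signal: np.ndarray, noise: np.ndarray, target_db: float) -> np.ndarray:
    """Hypothetical helper: scale `noise` so the mixture has the requested SNR."""
    signal = np.asarray(signal, dtype=float)
    noise = np.asarray(noise, dtype=float)
    p_signal = np.mean(signal ** 2)
    p_noise = np.mean(noise ** 2)
    # The required noise power is p_signal / 10^(target_db / 10);
    # the amplitude scale factor is the square root of the power ratio.
    scale = np.sqrt(p_signal / (p_noise * 10.0 ** (target_db / 10.0)))
    return signal + scale * noise
```

At 10 dB the noise power is one tenth of the signal power, which corresponds to a noise amplitude of roughly 1/3.16 of the signal amplitude.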

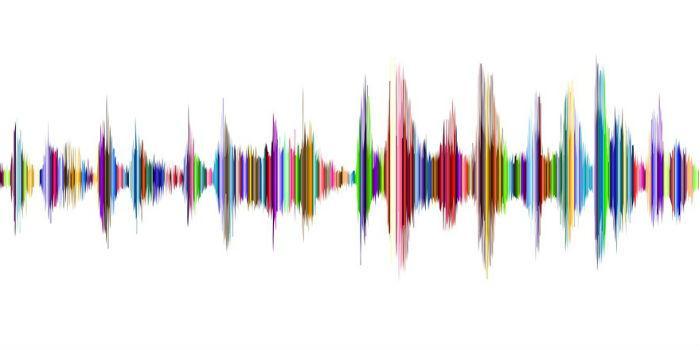

The researchers proposed an algorithm that divides recorded speech into short frames and measures the pitch frequency in each one. The accompanying software evaluates the stability of pronunciation relative to its average level and displays how speech quality changes over time as a color chart.
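The announcement does not say which pitch estimator the authors use. As a rough illustration only, the sketch below swaps in plain autocorrelation pitch estimation, a standard textbook technique and not necessarily the method in the paper. It splits a signal into short overlapping frames (30 ms frames with a 10 ms hop, assumed values), estimates each frame's pitch from the strongest autocorrelation peak between 60 and 400 Hz (an assumed search range), and reports each frame's deviation from the mean pitch. All function names and parameters are hypothetical.

```python
import numpy as np

def frame_signal(x: np.ndarray, frame_len: int, hop: int) -> np.ndarray:
    """Split a 1-D signal into overlapping frames, one frame per row."""
    if len(x) < frame_len:
        raise ValueError("signal is shorter than one frame")
    n_frames = 1 + (len(x) - frame_len) // hop
    return np.stack([x[i * hop : i * hop + frame_len] for i in range(n_frames)])

def pitch_autocorr(frame: np.ndarray, fs: float,
                   f_min: float = 60.0, f_max: float = 400.0) -> float:
    """Estimate one frame's pitch (Hz) from its autocorrelation peak.
    Returns 0.0 for silent frames."""
    frame = frame - np.mean(frame)
    if np.allclose(frame, 0.0):
        return 0.0
    # Keep non-negative lags only: index k holds the autocorrelation at lag k.
    ac = np.correlate(frame, frame, mode="full")[len(frame) - 1:]
    lag_min = int(fs / f_max)
    lag_max = min(int(fs / f_min), len(frame) - 1)
    lag = lag_min + int(np.argmax(ac[lag_min:lag_max + 1]))
    return fs / lag

def pitch_track(x: np.ndarray, fs: float,
                frame_ms: float = 30.0, hop_ms: float = 10.0) -> np.ndarray:
    """Pitch estimate for every frame of the signal."""
    frame_len = int(fs * frame_ms / 1000)
    hop = int(fs * hop_ms / 1000)
    frames = frame_signal(x, frame_len, hop)
    return np.array([pitch_autocorr(f, fs) for f in frames])

def relative_deviation(f0: np.ndarray) -> np.ndarray:
    """Each frame's deviation from the mean pitch, as a fraction of the mean."""
    mean_f0 = np.mean(f0)
    return np.abs(f0 - mean_f0) / mean_f0
```

In practice, silent or unvoiced frames (returned as 0.0 here) would need to be excluded before computing the mean pitch; the per-frame deviations could then be mapped to colors to produce a chart like the one described.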

The system treats the initial part of a recording as a template and assigns it 100% quality. If the estimated pitch frequencies of the subsequent voice frames remain roughly stable, the recording is judged to be of good quality. If the values vary widely, the recording is considered defective. Such failures can be caused by an interfering voice with a different pitch frequency.
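The template-based check described above could be sketched as follows. This is a hypothetical scoring rule, not the one from the paper: the first `n_template` frames form the template and get 100% quality, later frames lose quality linearly as their pitch departs from the template's mean pitch, and a recording is flagged as defective when more than a set fraction of frames falls below a quality threshold. The thresholds (`tolerance`, `min_quality`, `max_bad_fraction`) are illustrative assumptions.

```python
import numpy as np

def frame_quality(f0, n_template: int = 20, tolerance: float = 0.2) -> np.ndarray:
    """Hypothetical per-frame quality score in percent.

    The first `n_template` frames are the reference template (100% quality).
    Later frames score lower the further their pitch strays from the
    template's mean pitch; a relative deviation of `tolerance` or more
    scores 0%.
    """
    f0 = np.asarray(f0, dtype=float)
    ref = np.mean(f0[:n_template])
    deviation = np.abs(f0 - ref) / ref
    quality = 100.0 * np.clip(1.0 - deviation / tolerance, 0.0, 1.0)
    quality[:n_template] = 100.0  # template frames are 100% by definition
    return quality

def is_defective(quality, min_quality: float = 50.0,
                 max_bad_fraction: float = 0.1) -> bool:
    """Flag a recording if too many frames fall below `min_quality`."""
    bad_fraction = np.mean(np.asarray(quality) < min_quality)
    return bool(bad_fraction > max_bad_fraction)
```

For example, if the later frames of a recording jump from about 200 Hz to 260 Hz, as might happen when a second speaker talks over the first, those frames score 0% under these assumed thresholds and the recording is flagged.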

A major Russian bank reportedly has an interest in the technology and has provided recordings from its voice database for initial testing.

The global voice and speech recognition market was recently forecast to grow by more than US$28 billion by 2026.
